CloudFlare, SEO and building my own caching proxy with Nginx

by Alex Yumashev ·

Updated Sep 10 2019

TL;DR I spent the last month testing how CloudFlare affects my organic traffic by turning it off and on again™ and measuring the ranking changes. It looks like CF hurts SEO. So we've built our own caching proxy with ~~blackjack and hookers~~ AWS and nginx, saving a couple hundred dollars a month along the way.

Part 1: CloudFlare and SEO

If you use CloudFlare, your website shares its IP address and SSL certificate with hundreds (on a free plan) or dozens (on a paid plan) of other websites. Usually this shouldn't be a problem, unless you have some really shady neighbors.

I've been using CloudFlare's paid plan since forever, caching everything, including HTML, to get lightning-fast TTFB (time to first byte). But after reading three blog posts and one Reddit thread about CloudFlare ruining SEO because of a bad IP neighborhood, I decided to run a quick check.

I did a reverse IP lookup for my website's CloudFlare-assigned IP address using several independent tools. It turned out that out of 16 websites sitting on the same address, 7 (!) were hosting Chinese porn.

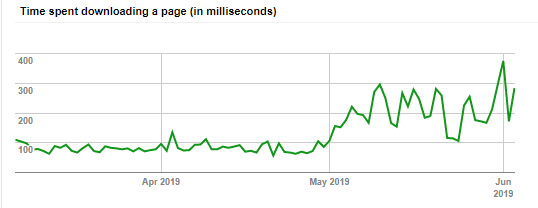

That didn't look good. I decided to disable CloudFlare and wait three weeks to see what would happen. Even though performance obviously degraded (see the picture; I disabled CF in early May), the rankings slightly improved.

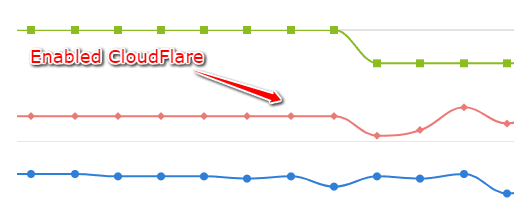

We decided this minor improvement wasn't worth the increased load on our servers (hosted on AWS, by the way) and turned CF back on. That's when all my rankings dropped by 2-8 positions overnight, and they've kept dropping ever since. Switching it off and on again™ a few more times showed a very clear correlation with my organic traffic.

The drop after re-enabling CF

After talking to CloudFlare support, we learned that our only options for getting rid of the shady sites on our IP were upgrading to the crazy $3500/month plan OR moving the site to a new account in the hope that there wouldn't be any malicious neighbors (and registering yet another account if there were). So we eventually decided to remove CloudFlare permanently. Even if the fluctuations were just a coincidence, I simply want to exclude this variable from the equation.

Part 2: building your own caching proxy

Essentially, a CDN like CloudFlare does three things for you:

(1) it caches and optimizes content, reducing traffic bills and improving page speed, including TTFB (time to first byte), which is a ranking factor

(2) it protects the upstream server using a "web application firewall" (WAF)

(3) it serves the cached content from the datacenter that is nearest to the user.

You can get all of these by building your own caching reverse-proxy server, except for number (3).

But number (3) has its downsides too. You see, CloudFlare datacenters operate independently and cache content separately (confirmed with CF support). So if someone in Australia has loaded "foobar.html" from your website, that page is still not cached for users in Sweden, California, the Netherlands or wherever: the CDN will keep hitting your upstream server for "foobar.html" on behalf of each of those locations. And here's the irony: the more datacenters a CDN adds to its network (CloudFlare currently has around 180), the less effective the caching becomes. Taken to the absurd extreme, as the number of datacenters tends to infinity, every user gets their own caching datacenter and caching becomes 100% useless :)
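A rough way to see this (an idealized model of my own, not anything CloudFlare documents): say a page gets $R$ requests during its cache lifetime, spread uniformly at random over $N$ datacenters that cache independently and don't evict the page early. Each datacenter that sees at least one of those requests has to fetch the page from the origin once, so if $k$ datacenters see it, the hit ratio is $h = 1 - k/R$:

$$
\mathbb{E}[k] = N\left(1 - \left(1 - \tfrac{1}{N}\right)^{R}\right), \qquad h = 1 - \frac{\mathbb{E}[k]}{R}
$$

$$
N = 1:\; h = 1 - \tfrac{1}{R} \qquad\qquad N \to \infty:\; \mathbb{E}[k] \to R,\; h \to 0
$$

$$
R = 100,\ N = 1:\; h = 0.99 \qquad\qquad R = 100,\ N = 180:\; \mathbb{E}[k] = 180\left(1 - (179/180)^{100}\right) \approx 76.9,\; h \approx 0.23
$$

Real traffic is concentrated in a few regions rather than spread uniformly, so actual hit ratios would be better than this; the model only illustrates the trend.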

That is why I'm basically fine without the "N" in my "CDN".

So I got a 4 GB, 2-core server from AWS for $20 a month (which, by the way, is 10 times cheaper than CloudFlare's "business" plan and comes with a dedicated IP), installed nginx and configured it as a reverse proxy in front of my "origin" server. The proxy caches everything (not just static content) for 14 days, gzips and minifies content, and uses naxsi as the WAF. Here's the nginx config with my comments:

```nginx
# enable caching: use 10 MB of RAM for cache keys,
# and purge cached files that haven't been requested for 10 days
proxy_cache_path /tmp/nginx levels=1:2 keys_zone=my_zone:10m inactive=10d;
proxy_cache_key "$scheme$request_method$host$request_uri";

server {
    listen 443 ssl;

    # my SSL certificates
    ssl_certificate     /etc/ssl/cert.chained.crt;
    ssl_certificate_key /etc/ssl/key.rsa;

    server_name www.jitbit.com;

    location / {
        # enable caching
        proxy_cache my_zone;

        # adds a "HIT/MISS" cache header
        add_header X-Proxy-Cache $upstream_cache_status;

        # ignore these headers, let's effing cache everything
        # (careful: a cached response keeps its Set-Cookie header,
        # so any cookie in it is replayed to every visitor who gets that copy)
        proxy_ignore_headers Cache-Control Set-Cookie Expires;

        # HTTP status codes to cache for 14 days
        proxy_cache_valid 200 301 14d;

        # we can refresh a cached page by sending "my-secret-header: true":
        # nginx skips the cache, fetches the page from upstream and re-caches it
        proxy_cache_bypass $http_my_secret_header;

        # Host, X-Real-IP and X-Forwarded-* headers
        # (this file ships with the Debian/Ubuntu nginx packages)
        include proxy_params;

        # "www.jitbit.com" points to the upstream server in /etc/hosts;
        # we don't use SSL here to save performance
        proxy_pass http://www.jitbit.com;
    }
}
```
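The config above doesn't include the gzip and naxsi parts mentioned earlier. Here's a minimal sketch of how they could be wired in, assuming nginx was built with the naxsi module and that naxsi's core rules live at /etc/nginx/naxsi_core.rules (that path depends on how you install it). The CheckRule thresholds are the commonly used example values, not tuned ones, and the compression level is just a middle-of-the-road choice. Minification is left out because stock nginx doesn't do it (it takes a third-party module such as ngx_pagespeed).

```nginx
# --- http {} level, next to proxy_cache_path ---
include /etc/nginx/naxsi_core.rules;   # assumed path

gzip on;
gzip_comp_level 5;
# text/html is always compressed once gzip is on, so it isn't listed here
gzip_types text/css text/plain application/javascript application/json image/svg+xml;

server {
    # ... listen / ssl / server_name as before ...

    location / {
        # naxsi WAF; enabling LearningMode first helps collect false positives
        SecRulesEnabled;
        #LearningMode;
        DeniedUrl "/RequestDenied";
        CheckRule "$SQL >= 8" BLOCK;
        CheckRule "$RFI >= 8" BLOCK;
        CheckRule "$TRAVERSAL >= 4" BLOCK;
        CheckRule "$EVADE >= 4" BLOCK;
        CheckRule "$XSS >= 8" BLOCK;

        # ... proxy_cache / proxy_pass directives as before ...
    }

    # naxsi redirects blocked requests here internally
    location /RequestDenied {
        return 403;
    }
}
```

Since naxsi inspects each incoming request before the cache lookup, cached pages stay behind the WAF too.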

"But wait, you're still paying for traffic!" That's the best part: I got a Lightsail server, not a regular EC2 instance. Lightsail servers come with free traffic included, and my $20 plan includes a whopping 4 terabytes per month, which is more than enough for me. Enabling VPC peering should even exclude the upstream traffic from this allowance, but I haven't played with that yet.
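For a sense of scale, 4 TB spread evenly over a 30-day month (taking 1 TB = 10^12 bytes) works out to roughly 12 Mbit/s of sustained traffic, around the clock:

$$
\frac{4 \times 10^{12}\ \text{bytes}}{30 \times 86\,400\ \text{s}} = \frac{4 \times 10^{12}}{2\,592\,000} \approx 1.54 \times 10^{6}\ \text{bytes/s} \approx 12.3\ \text{Mbit/s}
$$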